The Neuro-Symbolic Trinity - Ontology, Federated Learning & AIP

This analysis explores the high-impact convergence of three critical technologies defining the next generation of enterprise intelligence - Ontology, Federated Learning, and Artificial Intelligence Platforms.

2026-01-14T00:00:00.000Z


For the past decade, the software engineering community has swung like a pendulum between rigid, rule-based systems and the probabilistic magic of deep learning. We spent years building brittle expert systems, only to abandon them for the "black box" power of Neural Networks and Large Language Models (LLMs).

But as we deploy GenAI into critical enterprise infrastructure, we are hitting a wall. LLMs hallucinate. They lack context. They struggle to reason sequentially about proprietary business logic.

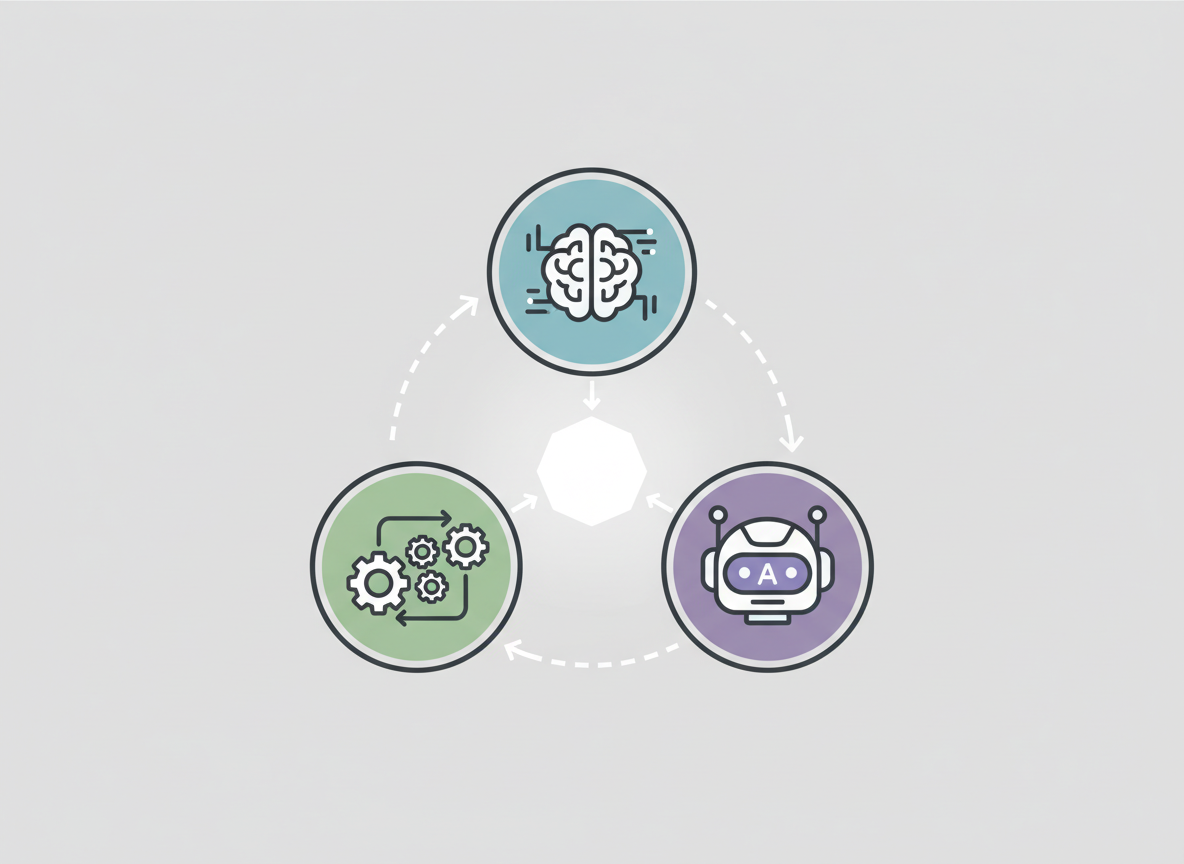

We are now entering the era of Neuro-Symbolic AI. This is not a retreat to the past, but a synthesis. It is the convergence of three architectural pillars: the Ontology (the symbolic representation of truth), Federated Learning (the distributed neural training mechanism), and the AIP (the orchestration layer that binds them).

In this article, we will dissect how these three technologies form a trinity that allows us to build systems that are not just "smart" in the chat-bot sense, but are architecturally sound, secure, and capable of executing complex actions in the real world.

Contents

- The Neuro-Symbolic Renaissance

- The Ontology: The Semantic Backbone

- Federated Learning: Intelligence Without Centralization

- The Convergence: Semantic Alignment in Distributed Systems

- AIP: The Orchestration and Action Layer

- Architectural Patterns for Implementation

- Governance and the Human-in-the-Loop

- Conclusion

1. The Neuro-Symbolic Renaissance

As architects, we often categorize AI into two buckets. System 1 (Neural) is fast, intuitive, and pattern-matching—like recognizing a face or generating a poem. System 2 (Symbolic) is slow, logical, and rule-based—like calculating tax or routing a supply chain shipment.

The "Neuro-Symbolic" approach attempts to fuse these. We use neural networks to perceive the world (unstructured data processing) and symbolic logic to reason about it (structured decision-making).

Why is this necessary now? Because enterprises are drowning in unstructured data but operate on structured rules. An LLM might be able to read a thousand PDFs about turbine maintenance, but without a symbolic framework, it cannot reliably update the Maintenance_Schedule table in an ERP system without risking data corruption. By combining the probabilistic power of LLMs, orchestrated through an AIP, with the deterministic structure of an Ontology, we create a system that can read, reason, and act safely.

2. The Ontology: The Semantic Backbone

The Ontology is the most misunderstood component in modern data stacks. It is often confused with a database schema, but it is fundamentally different. A schema describes data storage (tables, columns, foreign keys); an Ontology describes business concepts (objects, actions, links).

In our architecture, the Ontology serves as the digital twin of the organization. It maps the relationships between entities—Factories, Products, Customers, and Alerts—regardless of where the underlying data lives (Snowflake, S3, PostgreSQL, or legacy mainframes).

The Kinetic Layer

Crucially, a modern Ontology is "kinetic." It doesn't just store the state of an object; it defines the actions allowed on that object.

- Object: Factory_A

- Property: Output_Rate: 500 units/hr

- Action: Adjust_Output_Rate(new_rate)

By defining these actions symbolically, we give the AI a menu of tools it can use. The AI doesn't write a SQL UPDATE statement; it invokes the Adjust_Output_Rate function defined in the Ontology. This provides a deterministic abstraction layer that prevents the AI from hallucinating database queries.
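A minimal sketch of this idea, assuming a simple in-memory registry: the AI can only name an action that the ontology defines, and each action carries a deterministic validation rule that runs before any state changes. The classes `OntologyObject`, `ActionType`, and `Ontology`, as well as the 0 to 1000 units/hr bound, are hypothetical illustrations rather than any platform's API.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class OntologyObject:
    """Hypothetical ontology object: an identity plus named properties."""
    object_id: str
    properties: dict[str, Any] = field(default_factory=dict)


@dataclass
class ActionType:
    """An action the ontology permits, paired with a deterministic guard."""
    name: str
    validate: Callable[[OntologyObject, dict[str, Any]], None]
    apply: Callable[[OntologyObject, dict[str, Any]], None]


class Ontology:
    def __init__(self) -> None:
        self.objects: dict[str, OntologyObject] = {}
        self.actions: dict[str, ActionType] = {}

    def register_action(self, action: ActionType) -> None:
        self.actions[action.name] = action

    def invoke(self, action_name: str, object_id: str, **params: Any) -> OntologyObject:
        # The AI can only pick from the registered "menu"; unknown names are
        # rejected instead of being turned into free-form SQL.
        if action_name not in self.actions:
            raise KeyError(f"unknown action: {action_name}")
        obj = self.objects[object_id]
        action = self.actions[action_name]
        action.validate(obj, params)  # raises before any mutation happens
        action.apply(obj, params)
        return obj


def _validate_rate(obj: OntologyObject, params: dict[str, Any]) -> None:
    rate = params.get("new_rate")
    # Hypothetical business rule: rates must be between 0 and 1000 units/hr.
    if not isinstance(rate, (int, float)) or not 0 <= rate <= 1000:
        raise ValueError("new_rate must be a number between 0 and 1000 units/hr")


def _apply_rate(obj: OntologyObject, params: dict[str, Any]) -> None:
    obj.properties["Output_Rate"] = params["new_rate"]


ontology = Ontology()
ontology.objects["Factory_A"] = OntologyObject("Factory_A", {"Output_Rate": 500})
ontology.register_action(ActionType("Adjust_Output_Rate", _validate_rate, _apply_rate))
```

With this setup, `ontology.invoke("Adjust_Output_Rate", "Factory_A", new_rate=450)` updates the property, while an out-of-range rate or an unregistered action name raises an error and leaves `Factory_A` unchanged.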

3. Federated Learning: Intelligence Without Centralization

While the Ontology provides structure, we still need to train models on vast amounts of data. However, in large enterprises—especially in healthcare, defense, or banking—centralizing all data into a single data lake for training is often legally or logistically impossible. This is the problem of "Data Gravity."

Federated Learning (FL) solves this by flipping the paradigm: instead of moving data to the model, we move the model to the data.

In an FL architecture, a global model is sent to local edge nodes (e.g., individual hospitals or regional bank branches). Each node trains the model on its private data and computes a model update (gradients, or the resulting weight changes). Only these updates, not the raw data, are sent back to the central server, which aggregates them into the next version of the global model.

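This allows us to leverage the collective intelligence of the entire network without moving raw data off the individual nodes, preserving their data sovereignty. Privacy is not automatic, however: model updates can still leak information about the underlying records, so production deployments commonly add secure aggregation or differential privacy.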
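The round trip described above can be sketched with Federated Averaging (FedAvg), one common aggregation rule, in which nodes return locally trained weights and the server averages them weighted by sample count. This is a minimal pure-Python sketch under stated assumptions: a toy linear model, synthetic data at three hypothetical nodes, and illustrative helper names (`local_train`, `fed_avg`, `make_node_data`) that are not a library API.

```python
import random

Weights = list[float]
Dataset = list[tuple[list[float], float]]


def local_train(weights: Weights, data: Dataset, lr: float = 0.05, epochs: int = 20) -> Weights:
    """Full-batch gradient descent on mean squared error for y ≈ w·x, using only this node's data."""
    w = list(weights)
    n = len(data)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for j, xj in enumerate(x):
                grad[j] += 2 * err * xj / n
        w = [wi - lr * gj for wi, gj in zip(w, grad)]
    return w


def fed_avg(updates: list[tuple[Weights, int]]) -> Weights:
    """Server step: average client weights, weighted by each client's sample count."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[j] * n for w, n in updates) / total for j in range(dim)]


def make_node_data(true_w: Weights, n: int, rng: random.Random) -> Dataset:
    """Synthetic private data for one node (illustrative only)."""
    data = []
    for _ in range(n):
        x = [rng.uniform(-1.0, 1.0) for _ in true_w]
        y = sum(a * b for a, b in zip(true_w, x)) + rng.gauss(0.0, 0.01)
        data.append((x, y))
    return data


rng = random.Random(0)
TRUE_W = [2.0, -1.0]
nodes = [make_node_data(TRUE_W, n, rng) for n in (30, 50, 80)]

global_w = [0.0, 0.0]
for _ in range(20):  # communication rounds
    # Each node trains locally; only (weights, sample_count) pairs reach the server.
    updates = [(local_train(global_w, data), len(data)) for data in nodes]
    global_w = fed_avg(updates)
```

After the rounds complete, `global_w` should sit close to `TRUE_W` even though the server never touched a single `(x, y)` pair. Weighting by sample count keeps a small node from pulling the global model as hard as a large one.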

4. The Convergence: Semantic Alignment in Distributed Systems

Here is where the Trinity comes together. Federated Learning is notoriously difficult if the data at the edge nodes is heterogeneous. If Hospital A records "Heart Rate" as HR_BPM and Hospital B records it as Pulse_Rate, the local models will calculate incompatible gradients, and the global model will fail to converge.

The Ontology solves the heterogeneity problem in Federated Learning.

By enforcing an Ontological standard across the federation, we ensure semantic alignment. Before the local model trains, the local data is mapped to the shared Ontology.

- Raw Data: Pulse_Rate (Local CSV)

- Ontology Map: Maps to Patient -> Vitals -> Heart_Rate (Global Ontology)

- Training: The model trains on the standardized Heart_Rate feature.

This allows us to deploy Federated Learning across messy, real-world environments. The Ontology acts as the universal translator, ensuring that the "Neural" part of our system is learning from semantically consistent data.
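The mapping step can be sketched as a per-site lookup table that turns each local record into a feature vector whose order is fixed by the shared ontology. This is a minimal sketch: the `SITE_MAPPINGS` table, the dotted ontology paths, and the extra `Systolic_BP` feature are hypothetical.

```python
# Shared feature order defined by the global ontology (hypothetical paths).
GLOBAL_FEATURES = ["Patient.Vitals.Heart_Rate", "Patient.Vitals.Systolic_BP"]

# Each site declares how its local column names map onto ontology paths.
SITE_MAPPINGS = {
    "hospital_a": {"HR_BPM": "Patient.Vitals.Heart_Rate", "SBP": "Patient.Vitals.Systolic_BP"},
    "hospital_b": {"Pulse_Rate": "Patient.Vitals.Heart_Rate", "BP_Sys": "Patient.Vitals.Systolic_BP"},
}


def align(site: str, record: dict[str, float]) -> list[float]:
    """Map one local record onto the ontology-defined feature order."""
    mapping = SITE_MAPPINGS[site]
    aligned = {}
    for local_name, value in record.items():
        if local_name not in mapping:
            # Fail loudly rather than silently training on an unmapped column.
            raise KeyError(f"{site}: column {local_name!r} has no ontology mapping")
        aligned[mapping[local_name]] = value
    missing = [f for f in GLOBAL_FEATURES if f not in aligned]
    if missing:
        raise ValueError(f"{site}: missing ontology features {missing}")
    # Fixed ordering means feature j has the same meaning at every site.
    return [aligned[f] for f in GLOBAL_FEATURES]
```

For example, `align("hospital_a", {"HR_BPM": 72, "SBP": 120})` and `align("hospital_b", {"BP_Sys": 118, "Pulse_Rate": 80})` both yield vectors in the order `[heart_rate, systolic_bp]`, so the gradients computed at the two sites refer to the same features. Rejecting unmapped or missing columns is a deliberate design choice in this sketch; a real deployment might log and drop them instead.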

5. AIP: The Orchestration and Action Layer

With the Ontology providing the map and Federated Learning providing the distributed intelligence, we need an engine to drive the car. This is the Artificial Intelligence Platform (AIP).

AIPs (like Palantir's AIP or custom-built equivalents) act as the binding agent. They host the Large Language Models (LLMs) and provide them with access to the Ontology.

The RAG-to-Action Loop

Traditional RAG (Retrieval-Augmented Generation) only retrieves text to ground the model's answer. In the Neuro-Symbolic Trinity, we implement RAG-to-Action:

- User Prompt: "There is a supply shortage at Plant A. Re-route materials."

- AIP Retrieval: The LLM queries the Ontology. It sees Plant_A (Object) and its Inventory_Levels (Properties).

- Reasoning: The LLM uses its training (potentially refined via FL) to identify the best alternative source.

- Symbolic Action: The LLM calls the Ontology Action Create_Transfer_Order(Source=Plant_B, Target=Plant_A, Qty=500).

- Execution: The system executes the API call, updating the ERP.

This architecture moves us from "Chat with your Data" to "Act on your Data."
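The five steps of the loop can be sketched end to end as follows. This is a minimal sketch under several assumptions: the ontology is a hard-coded snapshot, `Reorder_Point` is a hypothetical property, `stub_llm` is a deterministic stand-in for the model (a real AIP would call an LLM that returns a structured tool call), and appending to `TRANSFER_ORDERS` stands in for the ERP API. The capitalized keyword arguments mirror the `Create_Transfer_Order(Source=..., Target=..., Qty=...)` signature used above.

```python
import json

# Hypothetical ontology snapshot; Reorder_Point is an assumed property.
ONTOLOGY = {
    "Plant_A": {"Inventory_Levels": 40, "Reorder_Point": 540},
    "Plant_B": {"Inventory_Levels": 1200, "Reorder_Point": 300},
    "Plant_C": {"Inventory_Levels": 350, "Reorder_Point": 300},
}
TRANSFER_ORDERS: list[dict] = []  # placeholder for the ERP system of record


def retrieve(plant: str) -> dict:
    """AIP Retrieval: structured ontology context instead of free text."""
    return {
        "target": (plant, ONTOLOGY[plant]),
        "candidates": {p: v for p, v in ONTOLOGY.items() if p != plant},
    }


def stub_llm(prompt: str, context: dict) -> str:
    """Reasoning: deterministic stand-in for the LLM that emits a JSON tool call.

    It ignores the prompt and picks the plant with the largest surplus above
    its reorder point; a real system would call a model here."""
    target, state = context["target"]
    source, _ = max(context["candidates"].items(),
                    key=lambda kv: kv[1]["Inventory_Levels"] - kv[1]["Reorder_Point"])
    qty = state["Reorder_Point"] - state["Inventory_Levels"]
    return json.dumps({"action": "Create_Transfer_Order",
                       "args": {"Source": source, "Target": target, "Qty": qty}})


def create_transfer_order(Source: str, Target: str, Qty: int) -> dict:
    """Symbolic Action: deterministic checks before anything is executed."""
    surplus = ONTOLOGY[Source]["Inventory_Levels"] - ONTOLOGY[Source]["Reorder_Point"]
    if not 0 < Qty <= surplus:
        raise ValueError(f"{Source} cannot supply {Qty} units")
    order = {"Source": Source, "Target": Target, "Qty": Qty}
    TRANSFER_ORDERS.append(order)  # Execution: stands in for the ERP API call
    return order


ACTIONS = {"Create_Transfer_Order": create_transfer_order}


def rag_to_action(prompt: str, plant: str) -> dict:
    context = retrieve(plant)
    call = json.loads(stub_llm(prompt, context))
    if call["action"] not in ACTIONS:  # only ontology-defined actions are callable
        raise KeyError(f"unknown action: {call['action']}")
    return ACTIONS[call["action"]](**call["args"])
```

Calling `rag_to_action("There is a supply shortage at Plant A. Re-route materials.", "Plant_A")` produces a transfer of 500 units from Plant_B to Plant_A. The key design point is that the model's output is parsed as data and dispatched only through the `ACTIONS` registry, so a malformed or invented action name fails instead of executing.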

6. Architectural Patterns for Implementation

When we engineer these systems, we typically follow a Hub-and-Spoke topology combined with a Sidecar pattern.

The Semantic Sidecar

At every node in the federation (the Spokes), we deploy a "Semantic Sidecar." This is a lightweight container responsible for mapping local raw data to the global Ontology definitions. This ensures that the heavy lifting of data transformation happens at the edge, using distributed compute resources rather than bottlenecking the central server.

The Aggregation Server

The Hub serves as the Aggregation Server for the Federated Learning process. It maintains the global model state and the master Ontology definition. Crucially, the Hub never sees raw data. It only sees:

- Ontology Metadata (definitions of objects).

- Model Gradients (mathematical weight updates).

- Action Logs (audit trails of what the AIP agents did).

This separation of concerns is vital for security compliance (GDPR, HIPAA, ITAR).
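One way to make the Hub's three permitted inputs explicit is a typed intake boundary that accepts only those message kinds and refuses anything else. The sketch below assumes hypothetical message classes and an `AggregationHub` class; a type check like this documents and enforces the contract but does not by itself guarantee privacy, since model updates can still encode information about the training data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class OntologyMetadata:
    object_type: str
    properties: tuple[str, ...]


@dataclass(frozen=True)
class ModelUpdate:
    node_id: str
    round_id: int
    weights: tuple[float, ...]
    num_samples: int


@dataclass(frozen=True)
class ActionLogEntry:
    node_id: str
    action: str
    approved_by: Optional[str]


class AggregationHub:
    """Hypothetical Hub that stores only metadata, model updates, and audit logs."""

    def __init__(self) -> None:
        self.ontology: dict[str, tuple[str, ...]] = {}
        self.pending_updates: list[ModelUpdate] = []
        self.audit_log: list[ActionLogEntry] = []

    def receive(self, msg: object) -> None:
        # The isinstance checks form the boundary: a raw record (for example a
        # dict of patient fields) is rejected before it is stored anywhere.
        if isinstance(msg, OntologyMetadata):
            self.ontology[msg.object_type] = msg.properties
        elif isinstance(msg, ModelUpdate):
            self.pending_updates.append(msg)
        elif isinstance(msg, ActionLogEntry):
            self.audit_log.append(msg)
        else:
            raise TypeError(f"hub refuses payload of type {type(msg).__name__}")
```

Frozen dataclasses keep received messages immutable, which suits an audit trail: once an `ActionLogEntry` is recorded, the Hub cannot silently rewrite it.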

7. Governance and the Human-in-the-Loop

The power of the Neuro-Symbolic Trinity brings significant risk. If an LLM can trigger real-world actions via the Ontology, a hallucination could be disastrous. Therefore, governance cannot be an afterthought; it must be baked into the protocol.

We implement "Human-in-the-Loop" logic within the AIP layer.

- Staging Actions: The AIP does not execute the Create_Transfer_Order immediately. It stages the action in a Proposed_Actions ontology object.

- Logic Gates: Deterministic rules check the proposal (e.g., IF Transfer_Cost > $10,000 THEN require_human_approval).

- Review Interface: A human operator is presented with the context and the proposed action.

- Commit: Only upon digital signature is the action committed to the system of record.

This hybrid approach leverages the speed of AI for proposal generation and the reliability of humans (and symbolic logic) for validation.
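The staging flow can be sketched as a small state machine: the AI only stages proposals, a deterministic gate applies the $10,000 rule from the example above, and anything over the threshold waits for a reviewer. This is a minimal sketch; `ProposedAction`, `stage`, `approve`, and `SYSTEM_OF_RECORD` are hypothetical names, recording the reviewer's identity stands in for a real digital signature, and auto-committing below the threshold is an assumption about how the gate is configured.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

APPROVAL_THRESHOLD = 10_000  # IF Transfer_Cost > $10,000 THEN require_human_approval


class Status(Enum):
    PROPOSED = "proposed"
    AWAITING_APPROVAL = "awaiting_approval"
    COMMITTED = "committed"


@dataclass
class ProposedAction:
    action: str
    args: dict
    transfer_cost: float
    status: Status = Status.PROPOSED
    approved_by: Optional[str] = None


SYSTEM_OF_RECORD: list[ProposedAction] = []


def stage(action: str, args: dict, transfer_cost: float) -> ProposedAction:
    """Called by the AI: stages a proposal and runs the deterministic logic gate."""
    proposal = ProposedAction(action, args, transfer_cost)
    if transfer_cost > APPROVAL_THRESHOLD:
        proposal.status = Status.AWAITING_APPROVAL  # routed to the review interface
    else:
        _commit(proposal)  # assumption: low-cost actions pass the gate automatically
    return proposal


def approve(proposal: ProposedAction, reviewer: str) -> ProposedAction:
    """Called by a human reviewer; the reviewer id stands in for a digital signature."""
    if proposal.status is not Status.AWAITING_APPROVAL:
        raise ValueError("only proposals awaiting approval can be approved")
    proposal.approved_by = reviewer
    _commit(proposal)
    return proposal


def _commit(proposal: ProposedAction) -> None:
    proposal.status = Status.COMMITTED
    SYSTEM_OF_RECORD.append(proposal)
```

Because the gate is ordinary code rather than a prompt instruction, the model cannot talk its way past the threshold: a $25,000 transfer stays in `AWAITING_APPROVAL` until `approve` is called.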

8. Conclusion

The future of enterprise software is not purely neural, nor is it purely symbolic. It is the sophisticated integration of both.

By grounding our AI in a robust Ontology, we give it the context it needs to reason. By utilizing Federated Learning, we allow it to learn from data it cannot see. And by orchestrating it through an AIP, we empower it to act.

For us as engineers and architects, the challenge is no longer just "training the best model." The challenge is building the ecosystem where that model can safely, securely, and effectively drive the business forward. The Neuro-Symbolic Trinity is the blueprint for that future.